sklearn.ensemble.HistGradientBoostingClassifier#

- class sklearn.ensemble.HistGradientBoostingClassifier(loss='log_loss', *, learning_rate=0.1, max_iter=100, max_leaf_nodes=31, max_depth=None, min_samples_leaf=20, l2_regularization=0.0, max_features=1.0, max_bins=255, categorical_features='warn', monotonic_cst=None, interaction_cst=None, warm_start=False, early_stopping='auto', scoring='loss', validation_fraction=0.1, n_iter_no_change=10, tol=1e-07, verbose=0, random_state=None, class_weight=None)[source]#

Histogram-based Gradient Boosting Classification Tree.

This estimator is much faster than

GradientBoostingClassifierfor big datasets (n_samples >= 10 000).This estimator has native support for missing values (NaNs). During training, the tree grower learns at each split point whether samples with missing values should go to the left or right child, based on the potential gain. When predicting, samples with missing values are assigned to the left or right child consequently. If no missing values were encountered for a given feature during training, then samples with missing values are mapped to whichever child has the most samples.

This implementation is inspired by LightGBM.

Read more in the User Guide.

New in version 0.21.

- Parameters:

- loss{‘log_loss’}, default=’log_loss’

The loss function to use in the boosting process.

For binary classification problems, ‘log_loss’ is also known as logistic loss, binomial deviance or binary crossentropy. Internally, the model fits one tree per boosting iteration and uses the logistic sigmoid function (expit) as inverse link function to compute the predicted positive class probability.

For multiclass classification problems, ‘log_loss’ is also known as multinomial deviance or categorical crossentropy. Internally, the model fits one tree per boosting iteration and per class and uses the softmax function as inverse link function to compute the predicted probabilities of the classes.

- learning_ratefloat, default=0.1

The learning rate, also known as shrinkage. This is used as a multiplicative factor for the leaves values. Use

1for no shrinkage.- max_iterint, default=100

The maximum number of iterations of the boosting process, i.e. the maximum number of trees for binary classification. For multiclass classification,

n_classestrees per iteration are built.- max_leaf_nodesint or None, default=31

The maximum number of leaves for each tree. Must be strictly greater than 1. If None, there is no maximum limit.

- max_depthint or None, default=None

The maximum depth of each tree. The depth of a tree is the number of edges to go from the root to the deepest leaf. Depth isn’t constrained by default.

- min_samples_leafint, default=20

The minimum number of samples per leaf. For small datasets with less than a few hundred samples, it is recommended to lower this value since only very shallow trees would be built.

- l2_regularizationfloat, default=0

The L2 regularization parameter. Use

0for no regularization (default).- max_featuresfloat, default=1.0

Proportion of randomly chosen features in each and every node split. This is a form of regularization, smaller values make the trees weaker learners and might prevent overfitting. If interaction constraints from

interaction_cstare present, only allowed features are taken into account for the subsampling.New in version 1.4.

- max_binsint, default=255

The maximum number of bins to use for non-missing values. Before training, each feature of the input array

Xis binned into integer-valued bins, which allows for a much faster training stage. Features with a small number of unique values may use less thanmax_binsbins. In addition to themax_binsbins, one more bin is always reserved for missing values. Must be no larger than 255.- categorical_featuresarray-like of {bool, int, str} of shape (n_features) or shape (n_categorical_features,), default=None

Indicates the categorical features.

None : no feature will be considered categorical.

boolean array-like : boolean mask indicating categorical features.

integer array-like : integer indices indicating categorical features.

str array-like: names of categorical features (assuming the training data has feature names).

"from_dtype": dataframe columns with dtype “category” are considered to be categorical features. The input must be an object exposing a__dataframe__method such as pandas or polars DataFrames to use this feature.

For each categorical feature, there must be at most

max_binsunique categories. Negative values for categorical features encoded as numeric dtypes are treated as missing values. All categorical values are converted to floating point numbers. This means that categorical values of 1.0 and 1 are treated as the same category.Read more in the User Guide.

New in version 0.24.

Changed in version 1.2: Added support for feature names.

Changed in version 1.4: Added

"from_dtype"option. The default will change to"from_dtype"in v1.6.- monotonic_cstarray-like of int of shape (n_features) or dict, default=None

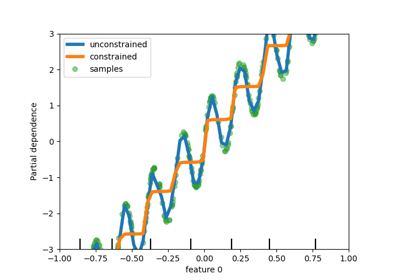

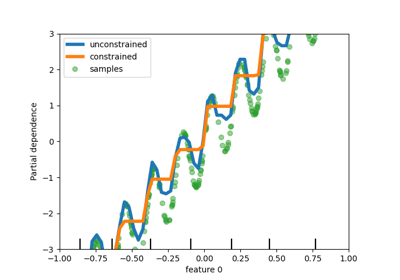

Monotonic constraint to enforce on each feature are specified using the following integer values:

1: monotonic increase

0: no constraint

-1: monotonic decrease

If a dict with str keys, map feature to monotonic constraints by name. If an array, the features are mapped to constraints by position. See Using feature names to specify monotonic constraints for a usage example.

The constraints are only valid for binary classifications and hold over the probability of the positive class. Read more in the User Guide.

New in version 0.23.

Changed in version 1.2: Accept dict of constraints with feature names as keys.

- interaction_cst{“pairwise”, “no_interactions”} or sequence of lists/tuples/sets of int, default=None

Specify interaction constraints, the sets of features which can interact with each other in child node splits.

Each item specifies the set of feature indices that are allowed to interact with each other. If there are more features than specified in these constraints, they are treated as if they were specified as an additional set.

The strings “pairwise” and “no_interactions” are shorthands for allowing only pairwise or no interactions, respectively.

For instance, with 5 features in total,

interaction_cst=[{0, 1}]is equivalent tointeraction_cst=[{0, 1}, {2, 3, 4}], and specifies that each branch of a tree will either only split on features 0 and 1 or only split on features 2, 3 and 4.New in version 1.2.

- warm_startbool, default=False

When set to

True, reuse the solution of the previous call to fit and add more estimators to the ensemble. For results to be valid, the estimator should be re-trained on the same data only. See the Glossary.- early_stopping‘auto’ or bool, default=’auto’

If ‘auto’, early stopping is enabled if the sample size is larger than 10000. If True, early stopping is enabled, otherwise early stopping is disabled.

New in version 0.23.

- scoringstr or callable or None, default=’loss’

Scoring parameter to use for early stopping. It can be a single string (see The scoring parameter: defining model evaluation rules) or a callable (see Defining your scoring strategy from metric functions). If None, the estimator’s default scorer is used. If

scoring='loss', early stopping is checked w.r.t the loss value. Only used if early stopping is performed.- validation_fractionint or float or None, default=0.1

Proportion (or absolute size) of training data to set aside as validation data for early stopping. If None, early stopping is done on the training data. Only used if early stopping is performed.

- n_iter_no_changeint, default=10

Used to determine when to “early stop”. The fitting process is stopped when none of the last

n_iter_no_changescores are better than then_iter_no_change - 1-th-to-last one, up to some tolerance. Only used if early stopping is performed.- tolfloat, default=1e-7

The absolute tolerance to use when comparing scores. The higher the tolerance, the more likely we are to early stop: higher tolerance means that it will be harder for subsequent iterations to be considered an improvement upon the reference score.

- verboseint, default=0

The verbosity level. If not zero, print some information about the fitting process.

- random_stateint, RandomState instance or None, default=None

Pseudo-random number generator to control the subsampling in the binning process, and the train/validation data split if early stopping is enabled. Pass an int for reproducible output across multiple function calls. See Glossary.

- class_weightdict or ‘balanced’, default=None

Weights associated with classes in the form

{class_label: weight}. If not given, all classes are supposed to have weight one. The “balanced” mode uses the values of y to automatically adjust weights inversely proportional to class frequencies in the input data asn_samples / (n_classes * np.bincount(y)). Note that these weights will be multiplied with sample_weight (passed through the fit method) ifsample_weightis specified.New in version 1.2.

- Attributes:

- classes_array, shape = (n_classes,)

Class labels.

- do_early_stopping_bool

Indicates whether early stopping is used during training.

n_iter_intNumber of iterations of the boosting process.

- n_trees_per_iteration_int

The number of tree that are built at each iteration. This is equal to 1 for binary classification, and to

n_classesfor multiclass classification.- train_score_ndarray, shape (n_iter_+1,)

The scores at each iteration on the training data. The first entry is the score of the ensemble before the first iteration. Scores are computed according to the

scoringparameter. Ifscoringis not ‘loss’, scores are computed on a subset of at most 10 000 samples. Empty if no early stopping.- validation_score_ndarray, shape (n_iter_+1,)

The scores at each iteration on the held-out validation data. The first entry is the score of the ensemble before the first iteration. Scores are computed according to the

scoringparameter. Empty if no early stopping or ifvalidation_fractionis None.- is_categorical_ndarray, shape (n_features, ) or None

Boolean mask for the categorical features.

Noneif there are no categorical features.- n_features_in_int

Number of features seen during fit.

New in version 0.24.

- feature_names_in_ndarray of shape (

n_features_in_,) Names of features seen during fit. Defined only when

Xhas feature names that are all strings.New in version 1.0.

See also

GradientBoostingClassifierExact gradient boosting method that does not scale as good on datasets with a large number of samples.

sklearn.tree.DecisionTreeClassifierA decision tree classifier.

RandomForestClassifierA meta-estimator that fits a number of decision tree classifiers on various sub-samples of the dataset and uses averaging to improve the predictive accuracy and control over-fitting.

AdaBoostClassifierA meta-estimator that begins by fitting a classifier on the original dataset and then fits additional copies of the classifier on the same dataset where the weights of incorrectly classified instances are adjusted such that subsequent classifiers focus more on difficult cases.

Examples

>>> from sklearn.ensemble import HistGradientBoostingClassifier >>> from sklearn.datasets import load_iris >>> X, y = load_iris(return_X_y=True) >>> clf = HistGradientBoostingClassifier().fit(X, y) >>> clf.score(X, y) 1.0

Methods

Compute the decision function of

X.fit(X, y[, sample_weight])Fit the gradient boosting model.

Get metadata routing of this object.

get_params([deep])Get parameters for this estimator.

predict(X)Predict classes for X.

Predict class probabilities for X.

score(X, y[, sample_weight])Return the mean accuracy on the given test data and labels.

set_fit_request(*[, sample_weight])Request metadata passed to the

fitmethod.set_params(**params)Set the parameters of this estimator.

set_score_request(*[, sample_weight])Request metadata passed to the

scoremethod.Compute decision function of

Xfor each iteration.Predict classes at each iteration.

Predict class probabilities at each iteration.

- decision_function(X)[source]#

Compute the decision function of

X.- Parameters:

- Xarray-like, shape (n_samples, n_features)

The input samples.

- Returns:

- decisionndarray, shape (n_samples,) or (n_samples, n_trees_per_iteration)

The raw predicted values (i.e. the sum of the trees leaves) for each sample. n_trees_per_iteration is equal to the number of classes in multiclass classification.

- fit(X, y, sample_weight=None)[source]#

Fit the gradient boosting model.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

The input samples.

- yarray-like of shape (n_samples,)

Target values.

- sample_weightarray-like of shape (n_samples,) default=None

Weights of training data.

New in version 0.23.

- Returns:

- selfobject

Fitted estimator.

- get_metadata_routing()[source]#

Get metadata routing of this object.

Please check User Guide on how the routing mechanism works.

- Returns:

- routingMetadataRequest

A

MetadataRequestencapsulating routing information.

- get_params(deep=True)[source]#

Get parameters for this estimator.

- Parameters:

- deepbool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- paramsdict

Parameter names mapped to their values.

- property n_iter_#

Number of iterations of the boosting process.

- predict(X)[source]#

Predict classes for X.

- Parameters:

- Xarray-like, shape (n_samples, n_features)

The input samples.

- Returns:

- yndarray, shape (n_samples,)

The predicted classes.

- predict_proba(X)[source]#

Predict class probabilities for X.

- Parameters:

- Xarray-like, shape (n_samples, n_features)

The input samples.

- Returns:

- pndarray, shape (n_samples, n_classes)

The class probabilities of the input samples.

- score(X, y, sample_weight=None)[source]#

Return the mean accuracy on the given test data and labels.

In multi-label classification, this is the subset accuracy which is a harsh metric since you require for each sample that each label set be correctly predicted.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

Test samples.

- yarray-like of shape (n_samples,) or (n_samples, n_outputs)

True labels for

X.- sample_weightarray-like of shape (n_samples,), default=None

Sample weights.

- Returns:

- scorefloat

Mean accuracy of

self.predict(X)w.r.t.y.

- set_fit_request(*, sample_weight: bool | None | str = '$UNCHANGED$') HistGradientBoostingClassifier[source]#

Request metadata passed to the

fitmethod.Note that this method is only relevant if

enable_metadata_routing=True(seesklearn.set_config). Please see User Guide on how the routing mechanism works.The options for each parameter are:

True: metadata is requested, and passed tofitif provided. The request is ignored if metadata is not provided.False: metadata is not requested and the meta-estimator will not pass it tofit.None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (

sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.New in version 1.3.

Note

This method is only relevant if this estimator is used as a sub-estimator of a meta-estimator, e.g. used inside a

Pipeline. Otherwise it has no effect.- Parameters:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for

sample_weightparameter infit.

- Returns:

- selfobject

The updated object.

- set_params(**params)[source]#

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as

Pipeline). The latter have parameters of the form<component>__<parameter>so that it’s possible to update each component of a nested object.- Parameters:

- **paramsdict

Estimator parameters.

- Returns:

- selfestimator instance

Estimator instance.

- set_score_request(*, sample_weight: bool | None | str = '$UNCHANGED$') HistGradientBoostingClassifier[source]#

Request metadata passed to the

scoremethod.Note that this method is only relevant if

enable_metadata_routing=True(seesklearn.set_config). Please see User Guide on how the routing mechanism works.The options for each parameter are:

True: metadata is requested, and passed toscoreif provided. The request is ignored if metadata is not provided.False: metadata is not requested and the meta-estimator will not pass it toscore.None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (

sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.New in version 1.3.

Note

This method is only relevant if this estimator is used as a sub-estimator of a meta-estimator, e.g. used inside a

Pipeline. Otherwise it has no effect.- Parameters:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for

sample_weightparameter inscore.

- Returns:

- selfobject

The updated object.

- staged_decision_function(X)[source]#

Compute decision function of

Xfor each iteration.This method allows monitoring (i.e. determine error on testing set) after each stage.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

The input samples.

- Yields:

- decisiongenerator of ndarray of shape (n_samples,) or (n_samples, n_trees_per_iteration)

The decision function of the input samples, which corresponds to the raw values predicted from the trees of the ensemble . The classes corresponds to that in the attribute classes_.

- staged_predict(X)[source]#

Predict classes at each iteration.

This method allows monitoring (i.e. determine error on testing set) after each stage.

New in version 0.24.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

The input samples.

- Yields:

- ygenerator of ndarray of shape (n_samples,)

The predicted classes of the input samples, for each iteration.

- staged_predict_proba(X)[source]#

Predict class probabilities at each iteration.

This method allows monitoring (i.e. determine error on testing set) after each stage.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

The input samples.

- Yields:

- ygenerator of ndarray of shape (n_samples,)

The predicted class probabilities of the input samples, for each iteration.

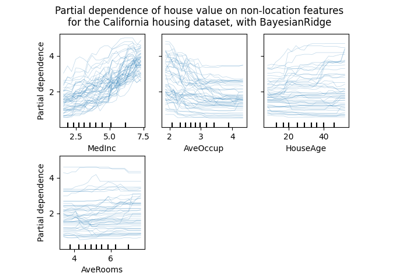

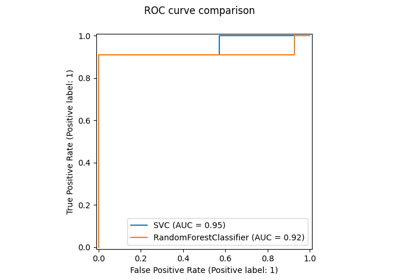

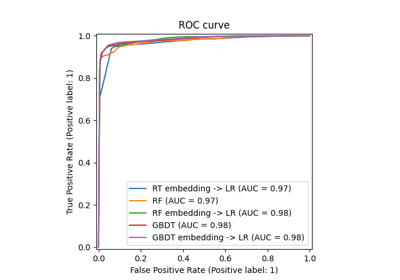

Examples using sklearn.ensemble.HistGradientBoostingClassifier#

Comparing Random Forests and Histogram Gradient Boosting models